For the past month I've been working with some audiophile friends on a project to expose audio spectrum data to JavaScript from Firefox’s audio element. Today I finally have something more than raw numbers to show off.

When I last wrote about our experiments with the <audio> element in part V, I had successfully written a patch to add a new DOM event (onAudioWritten), which is dispatched every time a frame of audio is decoded (this works for <audio> and <video>). I make this raw data available via a list type object called MozAudioData, which exposes _.length_ and _.item()_ for accessing the data in script (I'm hoping to move to the new WebGL arrays as soon as Vlad lands them). As I finished up for holidays, I made a very crude but promising demo that proved things were working:

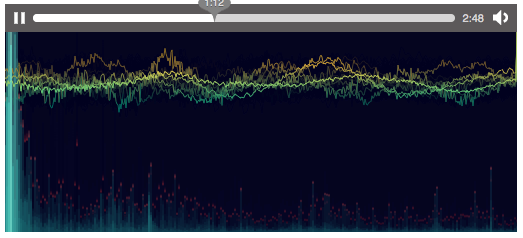
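
For anyone curious what reading a frame looks like from script, here is a minimal sketch. It relies only on the two things described above, that the data object has .length and .item(). The helper names (samplesToArray, peakLevel, fakeAudioData) are made up for illustration and are not part of the patch, and fakeAudioData is just a stand-in so the code can be tried outside a patched build.

```javascript
// Copy a MozAudioData-style list (anything with .length and .item(i))
// into a plain array so ordinary array code can work on it.
function samplesToArray(audioData) {
  var samples = new Array(audioData.length);
  for (var i = 0; i < audioData.length; i++) {
    samples[i] = audioData.item(i);
  }
  return samples;
}

// Largest absolute sample value in the frame: a crude loudness figure.
function peakLevel(samples) {
  var peak = 0;
  for (var i = 0; i < samples.length; i++) {
    var magnitude = Math.abs(samples[i]);
    if (magnitude > peak) {
      peak = magnitude;
    }
  }
  return peak;
}

// Hypothetical stand-in for one decoded frame (an assumption for testing,
// not something the patch provides).
function fakeAudioData(values) {
  return {
    length: values.length,
    item: function (i) { return values[i]; }
  };
}

// Example: pretend a three-sample frame just arrived.
var frame = fakeAudioData([0.25, -0.75, 0.5]);
console.log(peakLevel(samplesToArray(frame)));
```

In a patched build the same two helpers would be called from an onAudioWritten handler. How the event hands over the MozAudioData object isn't covered here, so that wiring is left out of the sketch.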

Now it was clear that the data could be had, but we wondered whether it was possible to consume it fast enough in JavaScript. Corban Brook decided to try, and wrote a Cooley-Tukey FFT algorithm in JavaScript to work with the raw data in real time. He then wrote a very cool visualization in Processing.js, and the end product is nothing short of amazing (you have to see the video demo of it running on Vimeo to get the full effect).
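
To give a rough idea of what a Cooley-Tukey FFT involves, here is a small radix-2 sketch in JavaScript. This is not Corban's code, just a textbook-style version written for illustration. It assumes the frame length is a power of two, and the function names (fft, magnitudes) are my own.

```javascript
// In-place iterative radix-2 Cooley-Tukey FFT.
// real and imag are arrays of equal, power-of-two length.
function fft(real, imag) {
  var n = real.length;
  if (n !== imag.length) {
    throw new Error("real and imag must have the same length");
  }
  if (n === 0 || (n & (n - 1)) !== 0) {
    throw new Error("length must be a power of two");
  }

  // Reorder the input into bit-reversed index order.
  for (var i = 1, j = 0; i < n; i++) {
    var bit = n >> 1;
    for (; j & bit; bit >>= 1) {
      j ^= bit;
    }
    j ^= bit;
    if (i < j) {
      var tmpReal = real[i]; real[i] = real[j]; real[j] = tmpReal;
      var tmpImag = imag[i]; imag[i] = imag[j]; imag[j] = tmpImag;
    }
  }

  // Combine transforms of size 1 into size 2, then 4, and so on.
  for (var size = 2; size <= n; size *= 2) {
    var half = size / 2;
    var angle = -2 * Math.PI / size;
    for (var start = 0; start < n; start += size) {
      for (var k = 0; k < half; k++) {
        var wr = Math.cos(angle * k); // twiddle factor, real part
        var wi = Math.sin(angle * k); // twiddle factor, imaginary part
        var a = start + k;
        var b = a + half;
        var tr = real[b] * wr - imag[b] * wi;
        var ti = real[b] * wi + imag[b] * wr;
        real[b] = real[a] - tr;
        imag[b] = imag[a] - ti;
        real[a] += tr;
        imag[a] += ti;
      }
    }
  }
}

// Magnitude of each frequency bin for a frame of real-valued samples.
// Only the first half of the bins is returned; for real input the
// second half mirrors the first.
function magnitudes(samples) {
  var real = samples.slice();
  var imag = samples.map(function () { return 0; });
  fft(real, imag);
  var result = [];
  for (var k = 0; k < real.length / 2; k++) {
    result.push(Math.sqrt(real[k] * real[k] + imag[k] * imag[k]));
  }
  return result;
}
```

Feeding each decoded frame through something like magnitudes() yields one value per frequency bin, which is the kind of data a spectrum visualization can draw.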

I want to do some more work to see what moving the FFT into C++ would mean in terms of performance. Having the ability to do even more complex analysis or visualization (imagine real time speech-to-text coming out of <video>) in script is our goal. I'm also starting to wonder about how best to add write capabilities to go with this read-only version. Imagine being able to do equalizing, filtering, etc. on the audio from script. I have no idea how to tackle this yet.

It's been fun working with these guys who know so much about audio. I know almost nothing about the data I'm extracting, and it's humbling to learn from them. We're approaching the point where input on API design would be useful, as I get this patch in better shape for review. If you'd like to get involved or try it out yourself, drop into irc.mozilla.org/processing.js, where we are working, and join the discussion in the bug. I'll continue to blog this series about our work, and I know the others are working on posts and more demos, too.