I've written recently about my work on ChatCraft.org. I've been doing a bunch of refactoring and new feature work, and things are in a pretty good state. It mostly works the way I'd expect now. Taras and Steven have filed a bunch of good ideas related to sharing, saving, and forking chats, and I've been exploring using SQLite Wasm for offline storage. But over the weekend I was thinking about something else. Not a feature exactly, but a way of thinking about the linear flow of a chat. The more I've worked with ChatCraft, the more I've learned about this form of dialog. Because a number of separate features flow from this, I thought I'd start by sketching things out in my blog instead of git.

A Chat

The (current) unit of AI interaction is the chat. A chat, in contrast to a conversation, dialog, debate, or any of the other ways one might describe "talking," is a kind of informal talk between friends. The word choice also gives a nod to the modern, technical meaning of "chat" as found in "chat app" or "chat online." When we "chat," we do so without formality, often in short bursts.

What informal chats depend upon is an existing, shared (i.e., external) context between the speakers. I want to chat with you about details for some event we're planning, or to clarify something you said on the phone, or to quickly ask for help. I can duck in and out of the conversation without ceremony, because this "chat" does not represent anything serious or lasting. That is, the relationship of the participants is independent of this interaction: we're just chatting.

Something similar is at work when I'm talking to an AI. Most of what I'm saying is not present in the chat. Maybe I want to know the specific syntax for performing an operation in a programming language. I'm not interested in learning the language, talking about why the syntax evolved the way that it did, debating other approaches, etc. I might write only 2 or 3 sentences, but everything I don't write is also necessary for the interaction to work.

Just as with a friend, I have to signal to an AI the type of thing I'm after. Lots has been written about prompting an AI, but increasingly I'm becoming aware of the need to evolve that prompt over a series of messages, to refine the idea (both my own and the AI's), and work toward an understanding. It's less about coming up with the right magical incantation to conjure an idea into existence, and more like a conversation over coffee with a colleague. So there's always going to be an enormous, shared context that we generally won't discuss; but in addition to this we necessarily need to build a smaller, more immediate context within the discussion itself.

My interest in AI doesn't include "training LLMs from scratch," which is to say, I'm not concerned with the larger, shared context. It's fundamentally important, but beyond me. However, I am fascinated by this more intimate, smaller context that develops within the conversation itself.

Context in ChatCraft

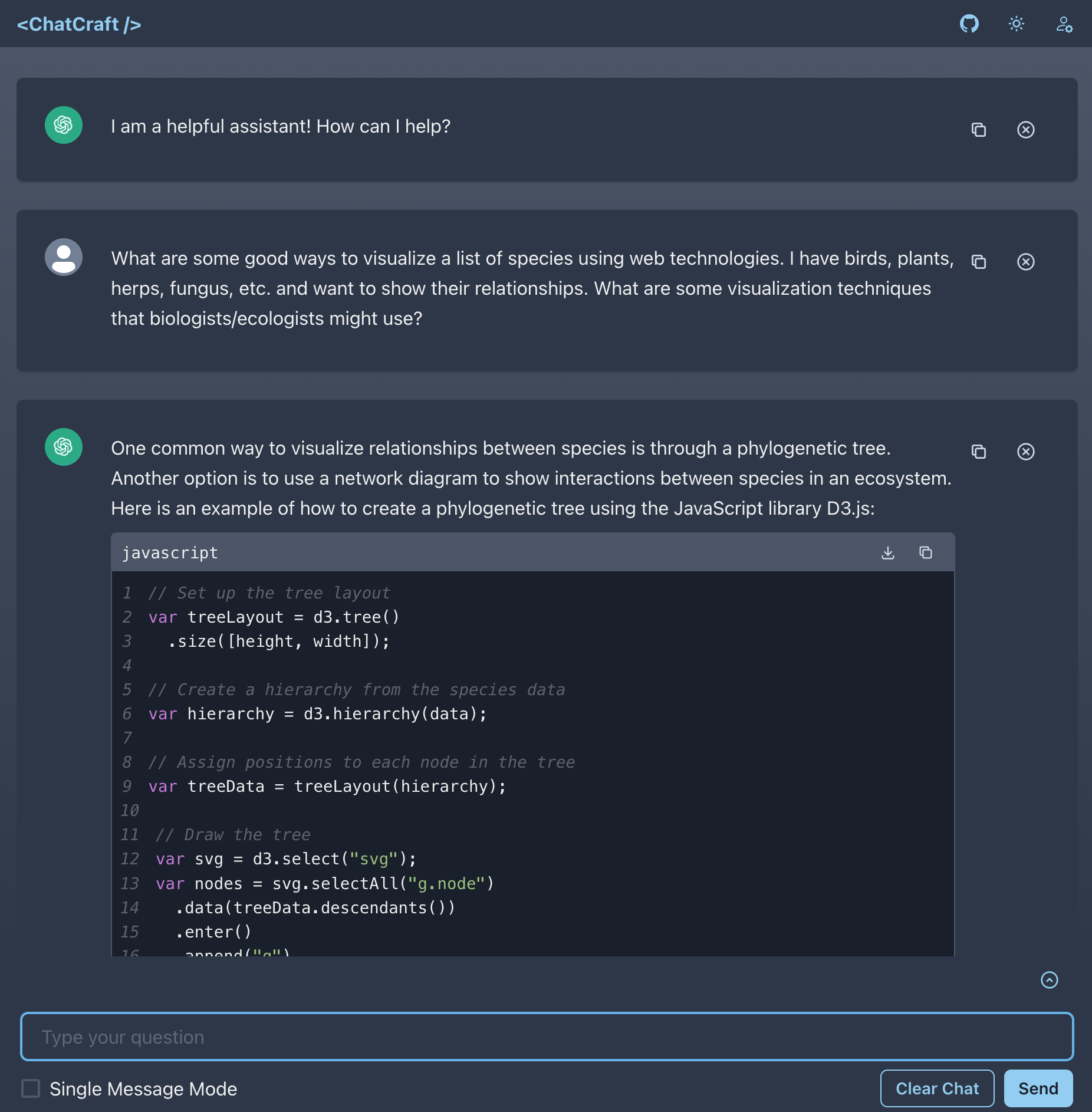

A chat in ChatCraft looks like this:

To begin, we've got the usual back-and-forth you'd expect. Beneath the UI, we actually have the following:

- A system prompt, which helps define the way our assistant will behave.

- An AI message (the first one, which we seed)

- A user message

- An AI message

- Repeat...
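A minimal sketch of that structure, assuming a flat, ordered list of messages tagged by role. The `Role` and `ChatMessage` types and the `startChat`/`addMessage` helpers are hypothetical names for illustration, not ChatCraft's actual classes, and the greeting text is a placeholder.

```typescript
// Hypothetical types for illustration; ChatCraft's own classes may differ.
type Role = "system" | "ai" | "human";

interface ChatMessage {
  id: number;
  role: Role;
  text: string;
}

// Start a chat with a system prompt and a seeded first AI message.
function startChat(systemPrompt: string, greeting: string): ChatMessage[] {
  return [
    { id: 0, role: "system", text: systemPrompt },
    { id: 1, role: "ai", text: greeting },
  ];
}

// Append the next message in the back-and-forth, returning a new array.
function addMessage(
  chat: ChatMessage[],
  role: Role,
  text: string
): ChatMessage[] {
  const nextId = chat.length === 0 ? 0 : chat[chat.length - 1].id + 1;
  return [...chat, { id: nextId, role, text }];
}

// Placeholder text; not ChatCraft's actual greeting.
let chat = startChat("You are ChatCraft.org, ...", "Hi! How can I help?");
chat = addMessage(chat, "human", "How do I reverse an array?");
chat = addMessage(chat, "ai", "Use arr.reverse(), or [...arr].reverse().");
console.log(chat.map((m) => m.role).join(" -> "));
// prints "system -> ai -> human -> ai"
```

Everything the model "knows" about this chat is just this list, which is what makes the ideas below possible.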

Lots of apps need to hide their system prompt (it's hard to do well!), but ours is easy to find, since ChatCraft is open source:

> You are ChatCraft.org, a web-based, expert programming AI.
>
> You help programmers learn, experiment, and be more creative with code.
>
> Respond in GitHub flavored Markdown. Format ALL lines of code to 80 characters or fewer. Use Mermaid diagrams when discussing visual topics.

When the user enables "Just show me the code" mode, we amend it with this:

> However, when responding with code, ONLY return the code and NOTHING else (i.e., don't explain ANYTHING).

By the time you read this, it will probably have changed again, but this is what it was when I wrote this post.
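Amending the prompt for that mode could look something like the sketch below. The `buildSystemPrompt` helper is hypothetical, and joining the prompt's sentences (and the amendment) with newlines is an assumption; the real app may assemble it differently.

```typescript
// Base prompt as quoted above; joining the lines with "\n" is an assumption.
const BASE_PROMPT = [
  "You are ChatCraft.org, a web-based, expert programming AI.",
  "You help programmers learn, experiment, and be more creative with code.",
  "Respond in GitHub flavored Markdown. Format ALL lines of code to 80 " +
    "characters or fewer. Use Mermaid diagrams when discussing visual topics.",
].join("\n");

const JUST_SHOW_ME_THE_CODE =
  "However, when responding with code, ONLY return the code and NOTHING " +
  "else (i.e., don't explain ANYTHING).";

// Hypothetical helper: amend the base prompt only when the mode is on.
function buildSystemPrompt(justShowMeTheCode: boolean): string {
  return justShowMeTheCode
    ? `${BASE_PROMPT}\n${JUST_SHOW_ME_THE_CODE}`
    : BASE_PROMPT;
}

console.log(buildSystemPrompt(true).split("\n").length); // prints 4
```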

The AI/Human Message pairs are kind of an obvious construct, but after using this paradigm for a while, new things are occurring to me.

There is Only One Author

When I'm chatting with a friend, there are two (or more) people involved. An effective and emotionally safe interaction will involve all parties getting a chance to speak and be heard. Furthermore, it's important that neither party manipulate or intentionally misrepresent what the other is saying.

These ideas are so obvious that I almost don't need to mention them; and it makes sense that they would find their way into how we model interactions with an AI as well.

In terms of manipulation, much has been said about AI hallucinations and how you have to be careful not to swallow whole any text that an AI provides. This is true. But I haven't read as much about people tinkering in the other direction.

When I first started working on ChatCraft, Taras had already added a very important feature: being able to remove a message from the current chat. If the AI gets off on some tangent that I don't want, I can delete a response and try again. It doesn't even have to be the last message I delete.

This seemingly simple idea has some profound implications. By adding the ability to remove a message from anywhere in the current context, we establish the fact that only one party is involved: I am at once the author, editor, and reader. There is no one else in the chat.
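Here is a minimal sketch of why this works, assuming a stateless chat API (as with OpenAI's chat completions) where the whole message list is sent with every request. The `ChatMessage` shape and `removeMessage` helper are hypothetical. Deleting a message from the middle of the list simply means the model never sees it again.

```typescript
interface ChatMessage {
  id: number;
  role: "system" | "ai" | "human";
  text: string;
}

// Drop any message by id, wherever it sits in the chat. Since the whole
// list is re-sent as context on each request, the model never sees it again.
function removeMessage(chat: ChatMessage[], id: number): ChatMessage[] {
  return chat.filter((m) => m.id !== id);
}

const chat: ChatMessage[] = [
  { id: 0, role: "system", text: "You are ChatCraft.org, ..." },
  { id: 1, role: "human", text: "How do I read a file in Node?" },
  { id: 2, role: "ai", text: "(an unwanted tangent)" },
  { id: 3, role: "human", text: "Show me the async version." },
  { id: 4, role: "ai", text: "Use fs/promises and await readFile()." },
];

// Remove the tangent from the middle; later messages are kept.
const pruned = removeMessage(chat, 2);
console.log(pruned.map((m) => m.id).join(",")); // prints 0,1,3,4
```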

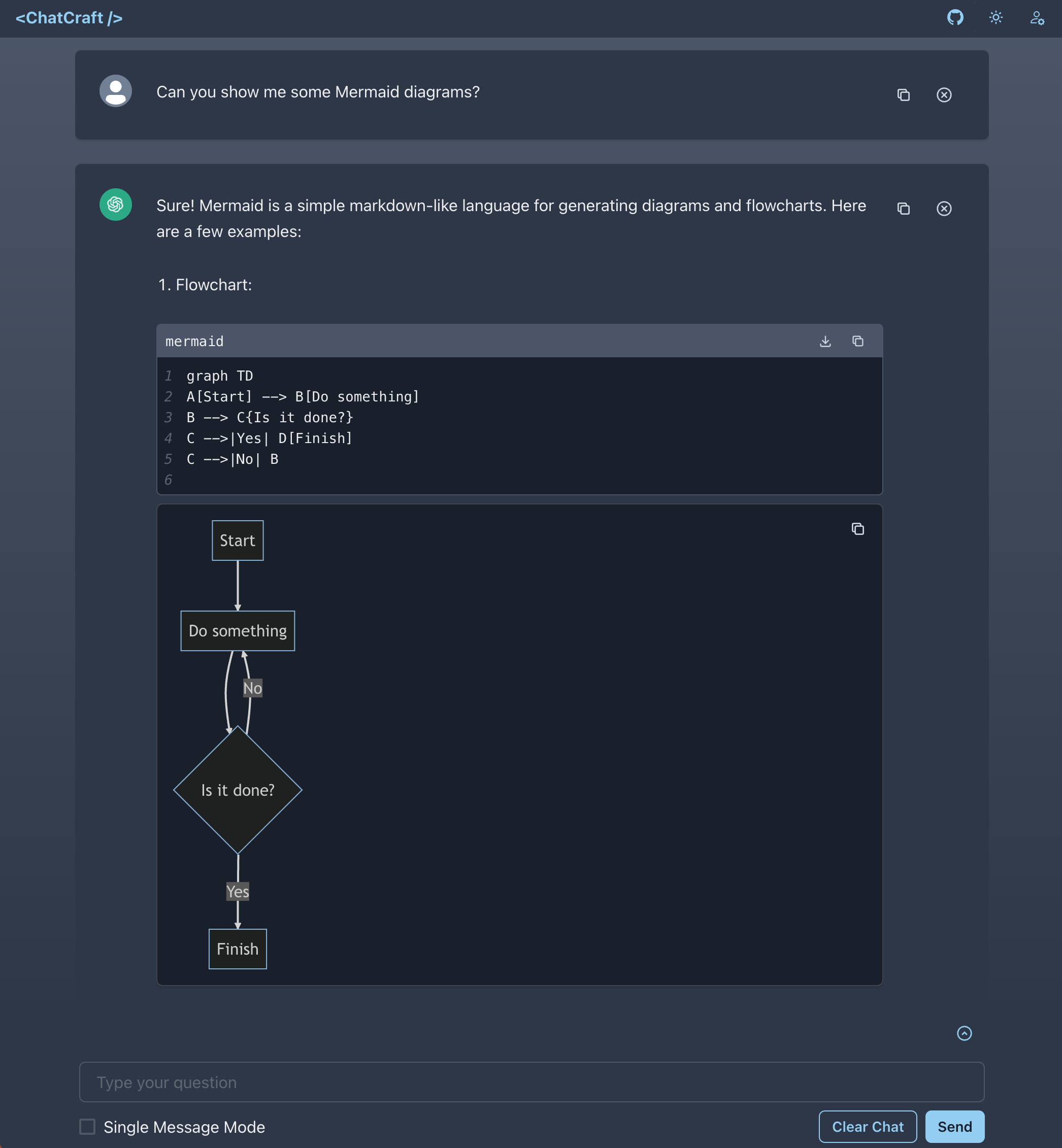

This realization becomes a foundation for building other interesting things. Let me give you a simple example. ChatCraft takes advantage of GPT's ability to create Mermaid diagrams in Markdown, and lets us render visual graphics:

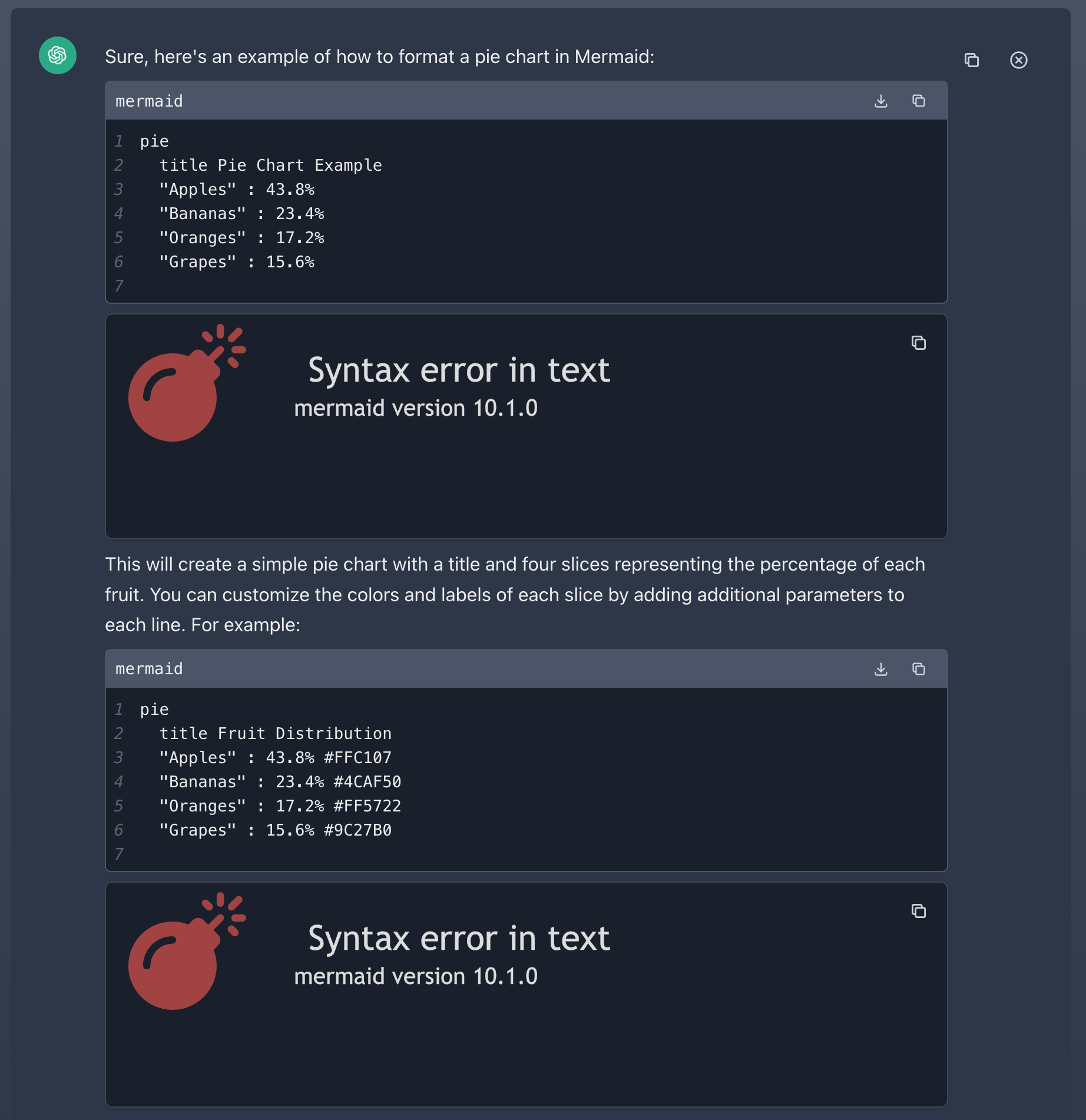

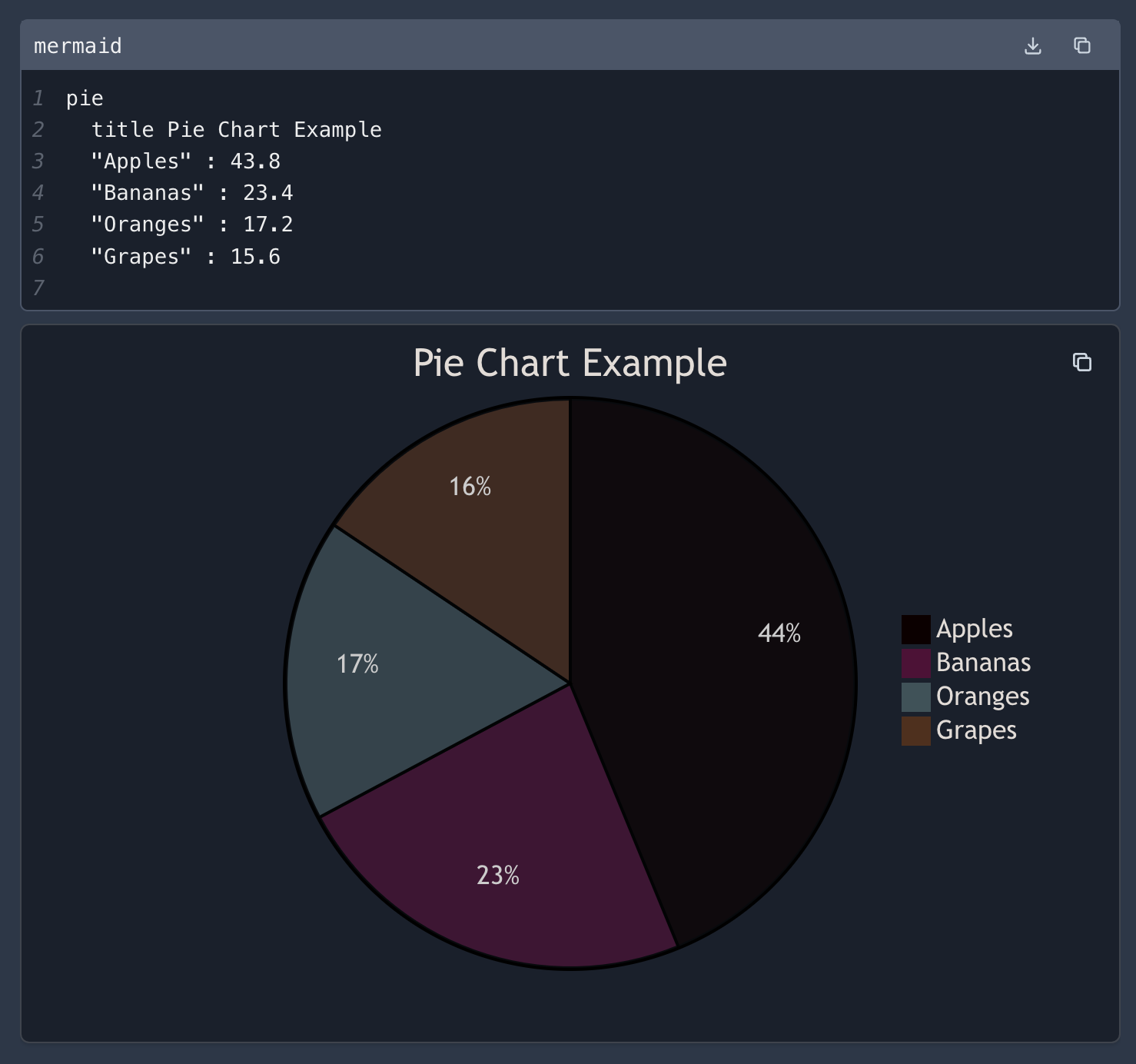

It can create some really complex diagrams, which makes understanding difficult relationships much easier. But it also makes silly mistakes:

In these examples, our inline renderer has blown up trying to render diagrams with syntax errors (i.e., the numeric values shouldn't include %, per the docs). For a while, I was embracing the typical notion of what a "chat" should be and pointing out the error. "My apologies, you're right..." would come the reply, and the error would get fixed.

But when I'm the only author in the chat, I should be able to manipulate and edit any response, be it mine or the AI's. Fixing those graphs would be as simple as adding an EDIT button I can click to fix anything in an AI's response, thus unlocking my follow-up messages:
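An EDIT button could boil down to something like this sketch: replace the text of any message, whoever "wrote" it, and keep the rest of the chat as-is. The `editMessage` helper and message shape are hypothetical, and the hand edit is simulated here with a string replace on a Mermaid pie chart that has the `%` mistake.

```typescript
interface ChatMessage {
  id: number;
  role: "system" | "ai" | "human";
  text: string;
}

// Replace the text of any message, mine or the AI's, leaving the rest alone.
function editMessage(
  chat: ChatMessage[],
  id: number,
  newText: string
): ChatMessage[] {
  return chat.map((m) => (m.id === id ? { ...m, text: newText } : m));
}

// A Mermaid pie chart with the "%" mistake: values must be plain numbers.
const broken = [
  "pie title Languages",
  '  "TypeScript" : 70%',
  '  "CSS" : 30%',
].join("\n");

const chat: ChatMessage[] = [
  { id: 0, role: "human", text: "Draw a pie chart of our languages." },
  { id: 1, role: "ai", text: broken },
];

// Fix it directly instead of asking the AI to apologize and try again.
const fixed = editMessage(chat, 1, broken.replace(/%/g, ""));
console.log(fixed[1].text.includes("%")); // prints false
```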

Mixing Multiple AI Models

Another idea that becomes possible is swapping out the AI for one or more of the messages in the chat. This would be unthinkable in a real chat, or even when chatting to an AI using a commercial product (why would anyone let me bring their competitor's AI into this interaction?). But in an open source app, I should be able to move effortlessly between chatting with ChatGPT, GPT-4, Claude, Bard, etc. I owe no fealty to an API provider. I should be able to pull and mix responses from various AI backends, leveraging different AI models where appropriate for the current circumstance.
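A rough sketch of mixing models, assuming each AI message records which backend produced it. The `ModelProvider` interface, `fakeProvider`, `nextAiMessage`, and the optional `model` field are all hypothetical; the providers are stand-ins, kept synchronous for brevity where real backends would make async API calls.

```typescript
interface ChatMessage {
  role: "system" | "ai" | "human";
  text: string;
  model?: string; // which backend wrote an AI message (hypothetical field)
}

// Hypothetical provider interface. Kept synchronous for brevity; a real
// backend would call an API and return a Promise<string>.
interface ModelProvider {
  name: string;
  complete(context: ChatMessage[]): string;
}

// Stand-in provider that reports how many messages of context it received.
function fakeProvider(name: string): ModelProvider {
  return {
    name,
    complete: (context) => `[${name}] reply to ${context.length} messages`,
  };
}

// Ask whichever provider we choose for the next AI message. Every model
// sees the same shared chat, including messages other models wrote.
function nextAiMessage(
  chat: ChatMessage[],
  provider: ModelProvider
): ChatMessage[] {
  const text = provider.complete(chat);
  return [...chat, { role: "ai", text, model: provider.name }];
}

let chat: ChatMessage[] = [{ role: "system", text: "You are ChatCraft.org" }];
chat = [...chat, { role: "human", text: "Write a regex for ISO dates." }];
chat = nextAiMessage(chat, fakeProvider("gpt-3.5-turbo"));
chat = [...chat, { role: "human", text: "Have another model review it." }];
chat = nextAiMessage(chat, fakeProvider("claude"));
console.log(chat.map((m) => m.model ?? m.role).join(" | "));
// prints "system | human | gpt-3.5-turbo | human | claude"
```

Because the chat is just data, the choice of backend becomes a per-message decision rather than a property of the whole conversation.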

Speaking of different models and context, I've also been thinking about context windows. While chatting with ChatGPT in ChatCraft, I often hit the 4K token limit (it's 8K with GPT-4). Rarely am I asking a question and getting an answer. More often than not, it's a slow evolution of an idea or piece of code. Until now, that's meant I have to start manually pruning messages out of the chat to continue on. But I've realized that I could implement a sliding context window, which would allow me to chat indefinitely with a ~4K context window that includes the most recent messages.
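One way a sliding window might work is sketched below, assuming the system prompt is always the first message and must always be kept. The `slidingWindow` and `estimateTokens` names are hypothetical, and the ~4 characters per token estimate is a rough heuristic standing in for the model's real tokenizer.

```typescript
interface ChatMessage {
  role: "system" | "ai" | "human";
  text: string;
}

// Rough heuristic of ~4 characters per token for English text. This is an
// assumption; a real implementation would count with the model's tokenizer.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Always keep the system prompt (assumed to be chat[0]), then walk back from
// the newest message, keeping messages until the next one would not fit.
function slidingWindow(chat: ChatMessage[], maxTokens: number): ChatMessage[] {
  if (chat.length === 0) return [];
  const [system, ...rest] = chat;
  let budget = maxTokens - estimateTokens(system.text);
  const recent: ChatMessage[] = [];
  for (let i = rest.length - 1; i >= 0; i--) {
    const cost = estimateTokens(rest[i].text);
    if (cost > budget) break; // stop so the window stays contiguous
    budget -= cost;
    recent.unshift(rest[i]);
  }
  return [system, ...recent];
}

// Five ~100-token messages plus a ~10-token system prompt, 310-token budget.
const chat: ChatMessage[] = [
  { role: "system", text: "s".repeat(40) },
  ...[1, 2, 3, 4, 5].map(
    (n): ChatMessage => ({
      role: n % 2 ? "human" : "ai",
      text: String(n).repeat(400),
    })
  ),
];
const windowed = slidingWindow(chat, 310);
console.log(windowed.length); // prints 4 (system prompt + 3 newest)
```

Since the 4K/8K limit also covers the model's reply, a real version would pass a `maxTokens` somewhat below the limit to leave room for the response.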

Working with Data

Part of what takes me over the 4K/8K limit is including blocks of code. I usually write to ChatCraft the way I'd discuss something in GitHub: Markdown with lots of code blocks. I even find myself copy/pasting three or four whole files into a single message. It's made me realize that I need to be able to "upload" or "attach" files directly into the chat. I want to talk about a piece of code, so let me drag it into the chat and have it get included as part of the context. If I want to deal with it as a piece of text, I can still copy/paste it into my message; but if all I want is for it to ride along with the rest of what I'm discussing, I should be able to add it easily.
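A minimal sketch of one possible approach: wrap each attached file in a fenced Markdown block and append it to the typed message, so it rides along as context. The `Attachment` type, the `FENCE_LANGS` table, and the `attachmentToMarkdown`/`buildMessage` helpers are hypothetical; the same approach would cover the CSV case discussed next.

```typescript
// Hypothetical shape for a file dragged into the chat.
interface Attachment {
  filename: string;
  contents: string;
}

// A few extension-to-language mappings for code fences (an assumption;
// unknown extensions simply get an untagged fence).
const FENCE_LANGS: Record<string, string> = {
  ts: "typescript",
  js: "javascript",
  py: "python",
  html: "html",
  css: "css",
  json: "json",
  csv: "csv",
};

// Wrap a file in a fenced Markdown block so it can ride along as context.
// The fence is longer than any backtick run in the file, so code that
// itself contains fences cannot close it early.
function attachmentToMarkdown(file: Attachment): string {
  const dot = file.filename.lastIndexOf(".");
  const ext = dot >= 0 ? file.filename.slice(dot + 1).toLowerCase() : "";
  const lang = FENCE_LANGS[ext] ?? "";
  const runs: string[] = file.contents.match(/`+/g) ?? [];
  const longestRun = runs.reduce((max, run) => Math.max(max, run.length), 0);
  const fence = "`".repeat(Math.max(3, longestRun + 1));
  return `${file.filename}:\n\n${fence}${lang}\n${file.contents}\n${fence}`;
}

// Combine the typed message with any attached files.
function buildMessage(text: string, files: Attachment[]): string {
  return [text, ...files.map(attachmentToMarkdown)].join("\n\n");
}

const message = buildMessage("I need a line graph of this CSV data...", [
  { filename: "sales.csv", contents: "month,total\nJan,120\nFeb,150" },
]);
console.log(message);
```

Keeping attachments as data separate from the typed text would also make it easy to drop them from the context later, just like any other message.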

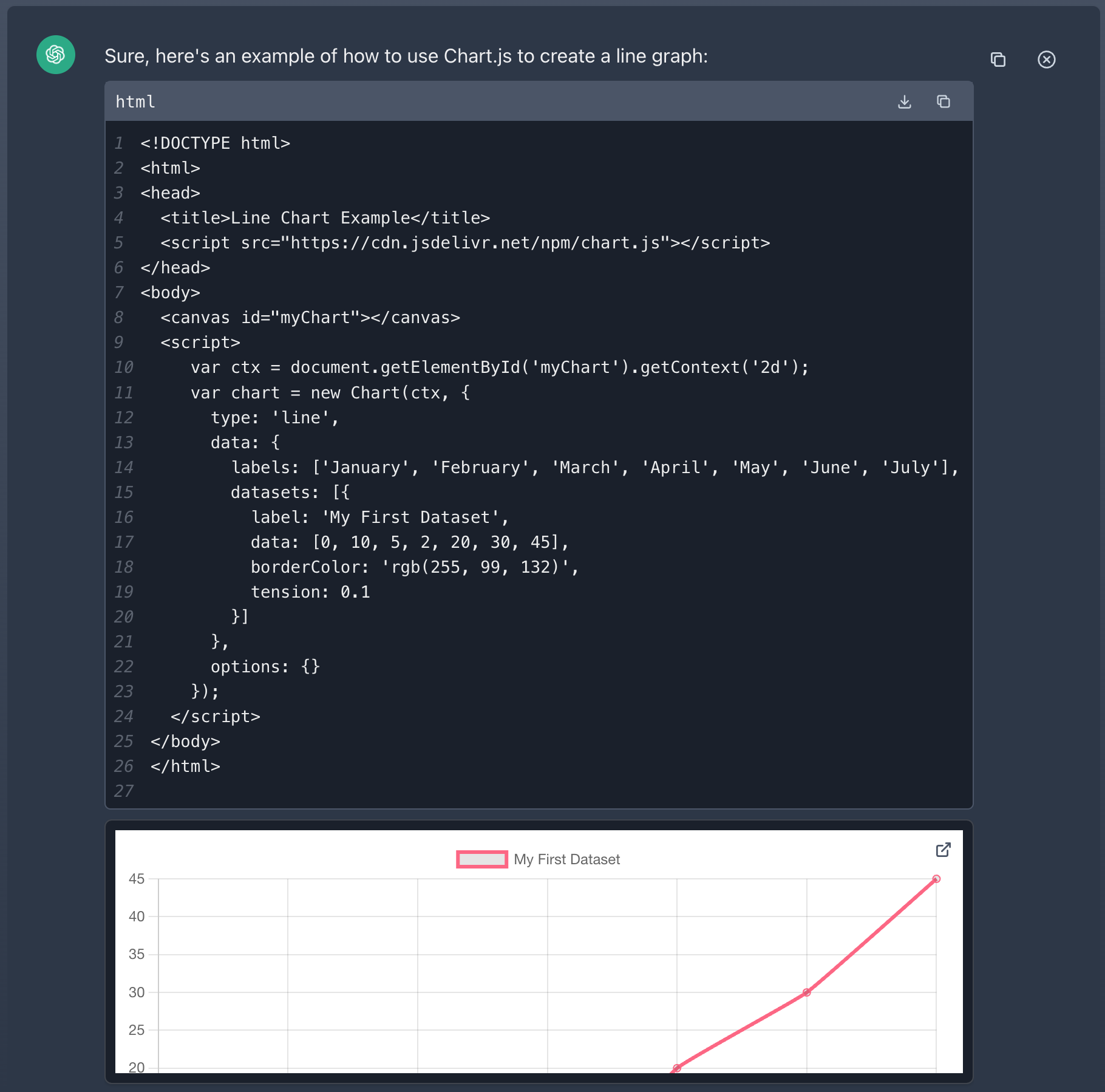

The same is true for other kinds of data. ChatCraft can already render HTML, which lets it build things like charts:

Maybe I want to draw a graph using a bunch of CSV data. What if I could drag or attach that data into the chat just like I mentioned above with code? "I need a line graph of this CSV data..."

Conclusion

I want to build a bunch of this, but thought I'd start by writing about it. Using ChatCraft to build ChatCraft has evolved my understanding of what I want in an AI, and it's fun to be able to prototype and explore your own ideas without having to wait on features (or even access!) from big AI providers.