Today we finished final testing for Processing.js 0.7 and released it for download. It represents a lot of hard work by an ever-growing community of developers. This release once again focuses on feature parity with Processing, as well as bug fixes. We've added some big new features, like PImage and image loading, as well as support for Processing's synchronous loading model in the browser via a new language directive. We've also continued to finish WebGL-based 3D support, adding beginCamera, endCamera, curveVertex, dist, curve, ambientLight, pointLight, noLights, directionalLight, and box. Our roadmap for adding full 3D support is just about at an end, which is a great feeling. We've also worked on many parser, language, and API bugs that people have been submitting (thanks to those who are filing them).

This release also introduces another layer of automated tests for the project: ref tests. We've had automated parser and unit tests for quite a few releases (there are over 1,200 tests in 0.7), but we still had to manually inspect the results of our drawing code, and when you're porting a graphics language, there are a lot of drawing tests. We ended up implementing an automated, pixel-based ref test system for 2D and 3D canvas. The process works like this:

- Write a visual test (Processing code) and make sure the output of native Processing (Java) and Processing.js match (visual inspection).

- Generate a ref test using a web-based tool. The ref test is made by running the code, then extracting pixel data from the canvas and putting it in a comment in the code. The file is then saved (code + bitmap pixel data) to a .pde file.

- A web-based ref test runner reads in the test files and does three things. First, it draws the pixels back to a canvas using ImageData and putImageData. Second, it runs the Processing code to produce a live sketch. Third, it does a visual pixel diff, comparing the two canvases.
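To make the "code + pixel data in one file" idea concrete, here is a minimal sketch of how a ref test file might be written and read back. The `// REF:` comment layout and the function names `buildRefTest` and `parseRefTest` are hypothetical, chosen for illustration; they are not the format Processing.js actually uses. In a browser, the pixel array would typically come from the canvas 2D context's `getImageData(...).data`, and the parsed pixels would be drawn back with `putImageData`.

```javascript
// Hypothetical ref test layout: the sketch source, followed by one comment
// line holding width, height, and comma-separated RGBA bytes.
function buildRefTest(source, width, height, pixels) {
  if (pixels.length !== width * height * 4) {
    throw new Error("pixel data does not match the given dimensions");
  }
  const data = Array.from(pixels).join(",");
  return source + "\n// REF:" + width + "," + height + ":" + data + "\n";
}

// Split a ref test file back into its sketch source and reference pixels.
function parseRefTest(fileText) {
  const match = /^\/\/ REF:(\d+),(\d+):([\d,]+)$/m.exec(fileText);
  if (!match) {
    throw new Error("no reference pixel data found");
  }
  const pixels = Uint8ClampedArray.from(match[3].split(","), (s) => Number(s));
  return {
    source: fileText.replace(match[0], "").trimEnd(),
    width: Number(match[1]),
    height: Number(match[2]),
    pixels: pixels
  };
}
```

Keeping the reference pixels inside the test file means a runner only needs one file per test: it can rebuild the reference image and run the live sketch from the same text.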

Doing a comparison between the pixels of two canvases is easy for 2D, and a bit more complicated for 3D (Andor has a great post about his 3D method in WebGL). Using a reference image generated by another browser on another OS and having it match this browser/OS combo? That's harder. To compensate for differences in rendering and canvas implementations, we apply a Gaussian blur in JavaScript to the two sets of pixels. We then compare the colour values using a colour tolerance. Our initial testing used 2% (i.e., 0.02 * 255), but further testing points to needing 5%.
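The blur-then-compare step can be sketched roughly as follows. This is an illustration under stated assumptions, not the project's actual code: the 3x3 kernel is a small approximation of a Gaussian, the tolerance is applied per channel, and the names `blur` and `diffPixels` are made up for this example. Both inputs are flat RGBA arrays of the kind found in `ImageData.data`.

```javascript
// 3x3 kernel approximating a Gaussian (an assumption; weights sum to 16).
const KERNEL = [1, 2, 1, 2, 4, 2, 1, 2, 1];

// Blur a flat RGBA array; edge pixels average over in-bounds neighbours only.
function blur(pixels, width, height) {
  const out = new Uint8ClampedArray(pixels.length);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      for (let c = 0; c < 4; c++) {
        let sum = 0;
        let weight = 0;
        for (let ky = -1; ky <= 1; ky++) {
          for (let kx = -1; kx <= 1; kx++) {
            const nx = x + kx;
            const ny = y + ky;
            if (nx < 0 || nx >= width || ny < 0 || ny >= height) continue;
            const w = KERNEL[(ky + 1) * 3 + (kx + 1)];
            sum += pixels[(ny * width + nx) * 4 + c] * w;
            weight += w;
          }
        }
        out[(y * width + x) * 4 + c] = Math.round(sum / weight);
      }
    }
  }
  return out;
}

// Compare two images after blurring. tolerance is a fraction such as 0.05.
// Returns the number of failing pixels and a diff image marking them in red.
function diffPixels(a, b, width, height, tolerance) {
  const limit = tolerance * 255;
  const blurredA = blur(a, width, height);
  const blurredB = blur(b, width, height);
  const diff = new Uint8ClampedArray(a.length); // starts fully transparent
  let failed = 0;
  for (let i = 0; i < a.length; i += 4) {
    let differs = false;
    for (let c = 0; c < 4; c++) {
      if (Math.abs(blurredA[i + c] - blurredB[i + c]) > limit) differs = true;
    }
    if (differs) {
      diff[i] = 255;     // red channel
      diff[i + 3] = 255; // opaque
      failed++;
    }
  }
  return { failed: failed, diff: diff };
}
```

A test would pass when `failed` is 0. With a 5% tolerance, a channel has to differ by more than 12.75 levels after blurring before a pixel counts as a mismatch, which absorbs small anti-aliasing differences between browsers.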

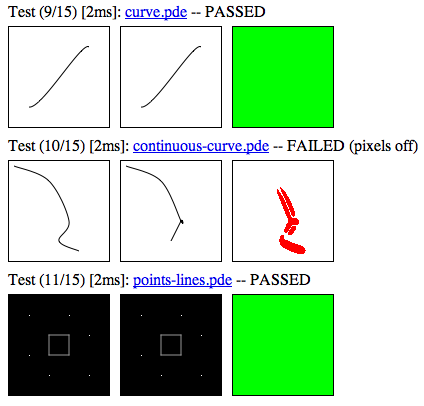

And it works! We only recently got the ref test infrastructure in place, so we don't have many tests yet (15 at present). However, today we caught our first bug using them: a recent check-in that changed curveVertex broke how we interpret control points. Here's what it looked like when it failed (red pixels are differences):

This technique is already saving us a lot of time as we test our code on Firefox, Minefield, Safari, Chrome, Opera and on Windows, Linux, Mac, and mobile OSes. I think other people doing visual work with canvas should explore this method. You can try it out for yourself here, and see code here. Thanks to Andor, Yury, and Corban for their work with me to get this working.